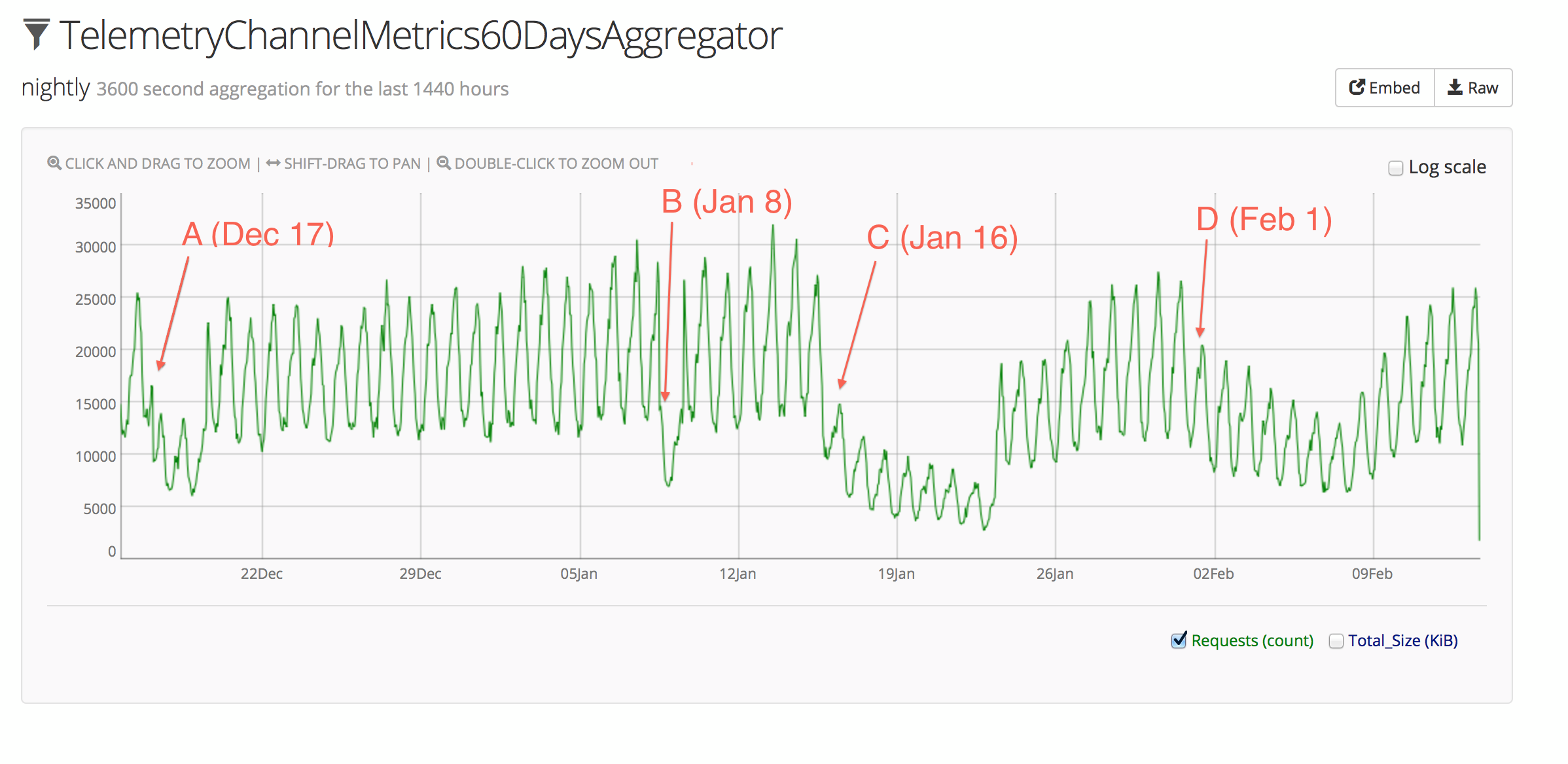

Here’s a graph of the submission rate from the nightly channel (where most of the action has taken place) over the past 60 days:

The x-axis is time, and the y-axis is the number of submissions per hour.

Points A (December 17) and B (January 8) are false alarms: in both cases the stats logging itself was interrupted, so they don’t represent data outages.

Point C (January 16) is where it starts to get interesting. In that case, Firefox nightly builds stopped submitting Telemetry data due to a change in event handling when the Telemetry client-side code was moved from a .js file to a .jsm module. The resolution is described in Bug 962153. This outage resulted in missing data for nightly builds from January 16th through to January 22nd.

As shown on the above graph, the submission rate dropped noticeably, but not anywhere close to zero. This is because not everyone on the nightly channel updates to the latest nightly as soon as it’s available. An interesting side-effect of this bug is that it gives us a glimpse of the rate at which nightly users update to new builds. In short, it looks like a large portion of users update right away, with a long tail of users waiting several days to update. The effect is apparent again as the submission rate recovers starting on January 22nd.

The second problem with submissions came at point D (February 1) as a result of changing the client Telemetry code to use OS.File for saving submissions to disk on shutdown. This resulted in a more gradual decrease in submissions, since the “saved-session” submissions were missing, but “idle-daily” submissions were still being sent. This outage resulted in partial data loss for nightly builds from February 1st through to February 7th.

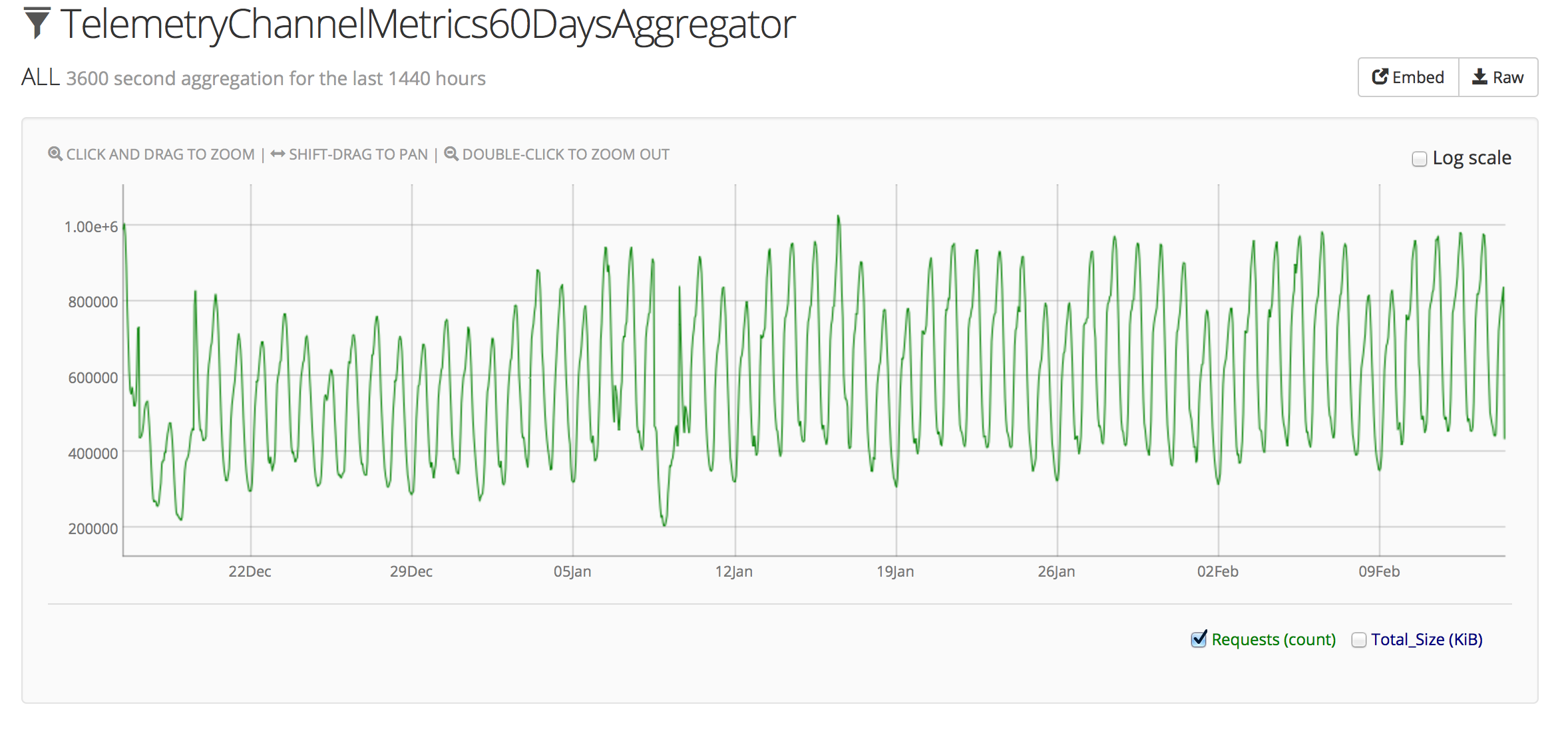

Both of these problems have been limited to the nightly channel, so the actual volume of submissions that were lost is relatively low. In fact, if you compare the above graph to the graph for all channels:

The anomalies on January 16th and February 1st are not even noticeable within the usual weekly pattern of overall Telemetry submissions (we normally observe a sort of “double peak” each day, with weekends showing about 15-20% fewer submissions). This makes sense given that the release channel comprises the vast majority of submissions.

The above graphs are all screenshots of our Heka aggregator instance. You can look at a live view of the submission stats and explore for yourself. Follow the links at the top of the iframes to dig further into what’s available.

There was a third data outage recently, but it cropped up in the server’s data validation / conversion processing, so it doesn’t appear as an anomaly on the submission graphs. On February 4th, the revision field for many submissions started being rejected as invalid. The server code was expecting a revision URL of the form http://hg.mozilla.org/..., but was getting URLs starting with https://... and thus rejecting them. Since revision is required in order to determine the correct version of Histograms.json to use for validating the rest of the payload, these submissions were simply discarded. The change from http to https came from a mostly-unrelated change to the Firefox build infrastructure. This outage affected both nightly and aurora, and caused the loss of submissions from builds between February 4th and February 12th, when the server-side fix landed.
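To illustrate the kind of check that would have tolerated the scheme change, here is a minimal sketch in Python. It is not the actual server code: the function name `normalize_revision`, the constants, and the example path in the usage note are all hypothetical, and it assumes only that a valid revision URL points at hg.mozilla.org over either http or https.

```python
from urllib.parse import urlsplit

# Assumption: both schemes are acceptable, and only the host identifies
# a valid revision URL. These names are illustrative, not the server's.
ALLOWED_SCHEMES = {"http", "https"}
EXPECTED_HOST = "hg.mozilla.org"


def normalize_revision(url):
    """Return a scheme-independent key for a revision URL, or None if unrecognised."""
    parts = urlsplit(url)
    if parts.scheme not in ALLOWED_SCHEMES or parts.hostname != EXPECTED_HOST:
        return None
    # Drop the scheme so http and https revisions map to the same key.
    return parts.hostname + parts.path
```

With a check like this, `normalize_revision("http://hg.mozilla.org/example/path")` and the same URL with `https` would produce the same key, so a later lookup of the matching Histograms.json would not depend on the scheme.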

So with all these outages happening so close together, what are we doing to prevent them?

Going forward, we would like to be able to completely avoid problems like the overly-eager rejection of payloads during validation, and in cases where we can’t avoid problems, we want to detect and fix them as early as possible.

In the specific case of the revision field, we are adding a test that will fail if the URL prefix changes.

In the case where the submission rate drops, we are adding automatic email notifications which will allow us to act quickly to fix the problem. The basic functionality is already in place thanks to Mike Trinkala, though the anomaly detection algorithm needs some tweaking to trigger on things like a gradual decline in submissions over time.
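As a rough illustration of what triggering on a gradual decline could look like, the sketch below compares the total for the most recent week of hourly counts against the week before it. Comparing whole weeks keeps the daily and weekend patterns from looking like anomalies. This is not the algorithm running in our aggregator; the function name, the window size, and the 20% threshold are assumptions chosen for the example.

```python
def has_declined(hourly_counts, window=24 * 7, max_drop=0.2):
    """Return True if the latest window's total is more than max_drop
    (as a fraction) below the total of the window just before it."""
    if len(hourly_counts) < 2 * window:
        return False  # not enough history to compare two full windows
    recent = sum(hourly_counts[-window:])
    baseline = sum(hourly_counts[-2 * window:-window])
    if baseline == 0:
        return False  # nothing to compare against
    return (baseline - recent) / baseline > max_drop
```

A sudden drop and a slow slide both show up here, since only the weekly totals matter. The trade-off is latency: a decline has to accumulate over part of a week before it crosses the threshold, so a check like this would complement a faster short-window alert rather than replace it.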

Similarly, we want to add automatic alerts for when the “invalid submission” rate goes up in the server’s validation process.
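A minimal sketch of such a check, assuming the validation process can report counts of valid and invalid submissions for some time period. The function name and both thresholds are hypothetical and would need tuning against real data.

```python
def invalid_rate_too_high(valid, invalid, max_invalid_fraction=0.05, min_total=100):
    """Return True if invalid submissions exceed max_invalid_fraction of the total.

    Periods with fewer than min_total submissions are ignored so that a
    handful of bad payloads in a quiet hour does not trigger an alert.
    """
    total = valid + invalid
    if total < min_total:
        return False
    return invalid / total > max_invalid_fraction
```

An alert like this would have caught the revision field problem, since those submissions were rejected during validation and never showed up as a drop in the submission rate.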

With these improvements in place, we should see far fewer data outages in Q2 and beyond.

Last-minute update

While poking around at various graphs to document this post, I noticed that Bug 967203 was still affecting aurora, so the fix has been uplifted.